The Day Everything Broke

Day 5 was Monday — the first full workday with agents running in production. And everything broke.

Not catastrophically. Worse: silently.

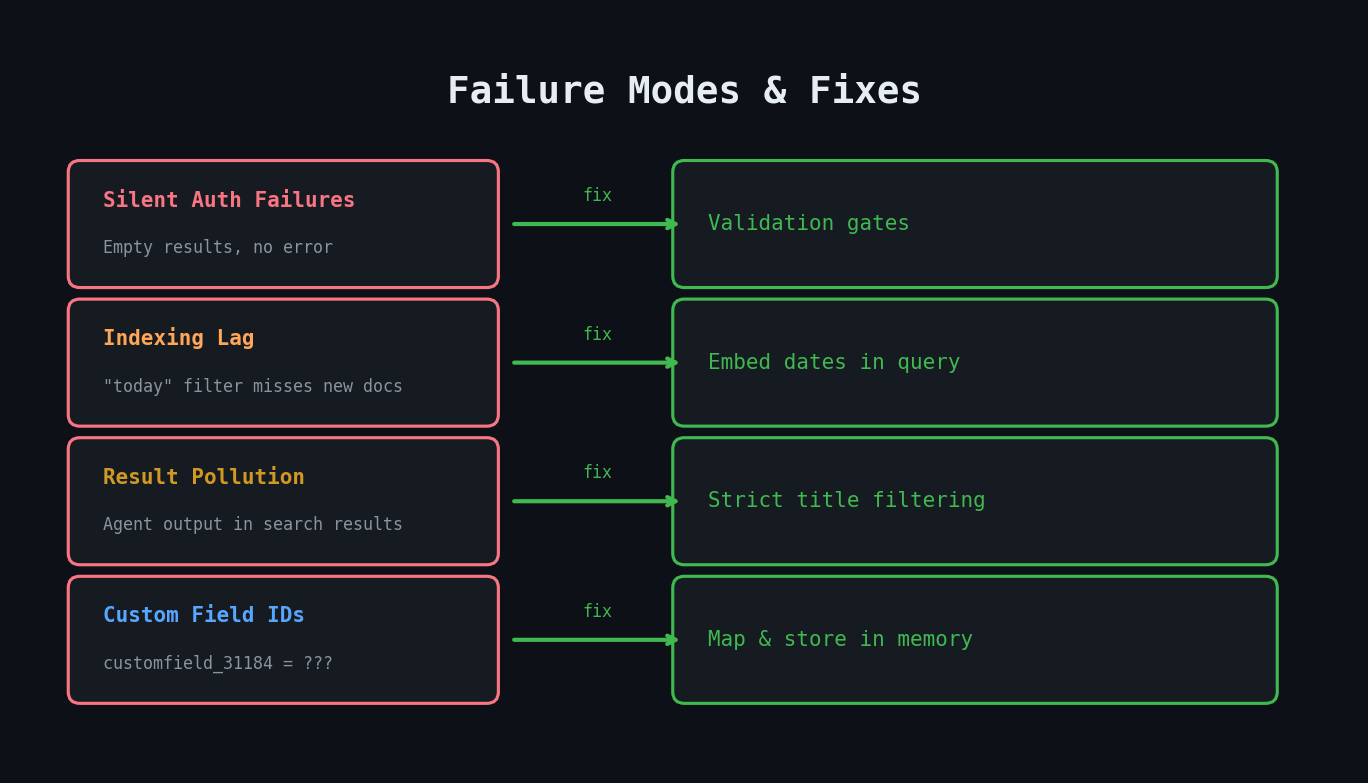

Failure Mode 1: Indexing Lag

My EOD summary agent searches for AI-generated meeting notes created during the day. It uses an enterprise search platform (Glean MCP) with a date filter: `updated: "today"`.

On Monday, the agent reported: "No meeting notes available for today."

I had 7 meetings. All of them had auto-generated notes. The notes existed — I could see them in Google Drive. But the search platform hadn't indexed them yet. The `updated: "today"` filter was checking the search index, not the source system. Indexing lag meant documents created in the afternoon weren't searchable until the next day.

The fix: Embed the date in the search query string itself instead of using a date filter.

```
// Before (broken)
search({ query: "meeting notes", updated: "today" })

// After (working)
search({ query: "Meeting Notes 2026/02/24" })
```

This fix applied to three agents that searched for meeting notes. A single root cause, three points of failure.
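Since three agents needed the same change, the dated query is worth building in one place. Here is a minimal sketch, assuming the notes carry the date in their title as `YYYY/MM/DD` (the format in the example above). `buildMeetingNotesQuery` is a hypothetical helper, not part of the search platform's API.

```typescript
// Hypothetical helper: puts the date into the query text itself instead of
// relying on an index-side `updated` filter.
function buildMeetingNotesQuery(day: Date): string {
  // Zero-pad single-digit months and days.
  const pad = (n: number): string => (n < 10 ? "0" + n : String(n));
  // Local-time getters, so "today" follows the machine's timezone.
  const stamp = `${day.getFullYear()}/${pad(day.getMonth() + 1)}/${pad(day.getDate())}`;
  return `Meeting Notes ${stamp}`;
}

// Months are zero-based in the Date constructor: 1 is February.
const exampleQuery = buildMeetingNotesQuery(new Date(2026, 1, 24));
// exampleQuery is "Meeting Notes 2026/02/24"
```

Sharing one helper across the three agents keeps the date format from drifting between them.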

Failure Mode 2: Silent Failures

The scariest part wasn't that the search failed; it was that the agent didn't tell me it had failed. It found zero notes and just... produced a report without meeting context. The report looked normal at first glance. It had Jira data, sprint metrics, action items. But the meeting synthesis section was suspiciously thin.

I only noticed because I happened to compare the EOD report to my calendar. Without that manual check, I would have trusted an incomplete report.

The fix: Add expected yield guidance. "On a day with 7+ meetings, expect 4-7 meeting notes. If fewer than 3 are found, trigger fallback search strategies before proceeding."
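The same guidance can also be written as an explicit check. This is a rough sketch, not the prompt text my agents actually use: `checkNoteYield` and its field names are made up for illustration, and the thresholds (7 meetings, 3 notes) are the ones quoted above. The guidance says nothing about lighter days, so this sketch simply lets them pass.

```typescript
type YieldVerdict = "ok" | "needs_fallback";

interface YieldExpectation {
  busyDayMeetings: number; // the "7+ meetings" threshold from the guidance
  minimumNotes: number;    // fewer notes than this on a busy day is suspicious
}

const defaultExpectation: YieldExpectation = { busyDayMeetings: 7, minimumNotes: 3 };

// Hypothetical check: compares what the search found with what the calendar implies.
function checkNoteYield(
  meetingCount: number,
  notesFound: number,
  expectation: YieldExpectation = defaultExpectation
): YieldVerdict {
  const busyDay = meetingCount >= expectation.busyDayMeetings;
  if (busyDay && notesFound < expectation.minimumNotes) {
    return "needs_fallback";
  }
  // Assumption: days below the busy-day threshold are not covered, so they pass.
  return "ok";
}

const mondayVerdict = checkNoteYield(7, 0);
// mondayVerdict is "needs_fallback": 7 meetings and zero notes, as on Day 5
```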

Failure Mode 3: Result Pollution

After fixing the indexing lag, the search returned plenty of results, but many were the wrong kind. My agents save reports to Google Drive. The search platform indexes those reports. So when searching for "Meeting Notes 2026/02/24", the search returned:

- Real meeting notes (correct)

- My own EOD summary from the previous day that mentioned the date (wrong)

- My standup report that referenced meeting notes (wrong)

- A triage report that quoted meeting discussions (wrong)

The agent couldn't distinguish real meeting notes from its own previous output that referenced meeting content.

The fix: Five-rule filtering applied after every search:

- Title must match the expected note pattern (ending with "Notes by Gemini")

- Title must contain today's date in the exact format

- Document must be in the expected folder

- Document type must be "Docs" (not markdown or code files)

- Skip "similar results" that the search platform groups together
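The five rules above translate into a single predicate. In this sketch the `SearchHit` shape, the folder names, and the sample titles are all hypothetical; the real field names depend on what the search platform returns.

```typescript
// Hypothetical shape of a search hit; real field names depend on the platform.
interface SearchHit {
  title: string;
  folder: string;
  docType: string;
  isSimilarResult: boolean; // true when the platform grouped it under another hit
}

interface NoteFilterConfig {
  dateStamp: string;      // e.g. "2026/02/24"
  expectedFolder: string; // hypothetical folder name
}

function isRealMeetingNote(hit: SearchHit, cfg: NoteFilterConfig): boolean {
  return (
    /Notes by Gemini$/.test(hit.title) &&      // rule 1: title pattern
    hit.title.indexOf(cfg.dateStamp) !== -1 && // rule 2: exact date in title
    hit.folder === cfg.expectedFolder &&       // rule 3: expected folder
    hit.docType === "Docs" &&                  // rule 4: document type
    !hit.isSimilarResult                       // rule 5: skip grouped results
  );
}

function filterMeetingNotes(hits: SearchHit[], cfg: NoteFilterConfig): SearchHit[] {
  return hits.filter((hit) => isRealMeetingNote(hit, cfg));
}

// Made-up sample data: one real note, one agent report, one grouped duplicate.
const sampleHits: SearchHit[] = [
  { title: "Sprint Planning 2026/02/24 - Notes by Gemini", folder: "Meet Notes", docType: "Docs", isSimilarResult: false },
  { title: "EOD Summary 2026/02/23", folder: "Agent Reports", docType: "Docs", isSimilarResult: false },
  { title: "Standup 2026/02/24 - Notes by Gemini", folder: "Meet Notes", docType: "Docs", isSimilarResult: true },
];
const keptNotes = filterMeetingNotes(sampleHits, { dateStamp: "2026/02/24", expectedFolder: "Meet Notes" });
// keptNotes contains only the Sprint Planning note
```

Requiring all five rules at once is deliberately strict: a dropped real note shows up as a low note count, while a polluted result passes unnoticed.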

Failure Mode 4: Missing Fallbacks

When one search strategy fails, the agent should try alternatives before giving up. My original agents had one search query and no fallback. Day 5's fixes introduced a 3-step fallback chain:

- Specific search: exact meeting title + date

- Broad search: just date-based pattern

- AI-powered summary: ask the search platform's AI to summarize what it knows (handles cases where documents exist but are too large to return in full)
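The chain above can be sketched as an ordered list of strategies tried until one returns enough results. Everything here is illustrative: `SearchStrategy`, `runFallbackChain`, and the stubbed strategies are hypothetical, and the strategies are synchronous only to keep the example short (real search calls would be asynchronous).

```typescript
// A strategy is any function that returns note titles.
// Real ones would call the search platform; these are stubs.
interface SearchStrategy {
  label: string;
  run: () => string[];
}

interface FallbackOutcome {
  usedStrategy: string | null; // null means every strategy came up short
  results: string[];
  attempted: string[];         // labels in the order they were tried
}

function runFallbackChain(strategies: SearchStrategy[], minimumResults: number): FallbackOutcome {
  const attempted: string[] = [];
  for (const strategy of strategies) {
    attempted.push(strategy.label);
    const results = strategy.run();
    if (results.length >= minimumResults) {
      // First strategy that yields enough wins; later ones are never run.
      return { usedStrategy: strategy.label, results, attempted };
    }
  }
  // Nothing worked: return an explicit empty outcome so the caller can flag it.
  return { usedStrategy: null, results: [], attempted };
}

// Stubbed chain: the specific search finds nothing, the broad one finds two notes.
const chainOutcome = runFallbackChain(
  [
    { label: "specific", run: () => [] },
    { label: "broad", run: () => ["Standup notes", "Planning notes"] },
    { label: "ai-summary", run: () => ["Summary of today's meetings"] },
  ],
  1
);
// chainOutcome.usedStrategy is "broad"; "ai-summary" was never attempted
```

Returning `usedStrategy: null` rather than an empty list alone lets the report say that every strategy failed, instead of silently omitting the section.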

The Real Lesson: Validation Gates

Every agent now has validation gates — checkpoints where the agent verifies its own data before proceeding.

```
Step 1: Gather data
Step 2: VALIDATION (does the data volume match expectations?)
  If 7 meetings but 0 notes → trigger fallback
  If search returned 20 results → apply filtering rules
Step 3: Process validated data
Step 4: Generate output
```

These gates catch the silent failures that would otherwise produce plausible-looking but incomplete reports.
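In code, a gate is just a checkpoint between gathering and processing that can change course and record why. The sketch below is one possible shape, not how my agents are implemented: `gatherWithValidation` and its callbacks are hypothetical, and treating "more results than meetings" as the pollution signal is my own simplification of the "20 results" case.

```typescript
interface GateInput {
  meetingCount: number;
  gather: () => string[];           // step 1: primary search
  fallback: () => string[];         // used when the primary search comes back empty
  keep: (title: string) => boolean; // filtering rule applied to suspicious result sets
}

interface GateResult {
  notes: string[];
  warnings: string[]; // surfaced in the report so failures are not silent
}

function gatherWithValidation(input: GateInput): GateResult {
  const warnings: string[] = [];
  let notes = input.gather();

  // Gate: meetings happened but nothing came back, so try the fallback.
  if (input.meetingCount > 0 && notes.length === 0) {
    warnings.push("primary search returned nothing; used fallback");
    notes = input.fallback();
  }

  // Gate (assumed heuristic): more results than meetings suggests pollution.
  if (notes.length > input.meetingCount) {
    warnings.push("more results than meetings; applied filter");
    notes = notes.filter(input.keep);
  }

  // Still empty: say so explicitly instead of producing a normal-looking report.
  if (input.meetingCount > 0 && notes.length === 0) {
    warnings.push("no meeting notes found; report is incomplete");
  }
  return { notes, warnings };
}

// Stubbed run: one meeting, two hits, one of which is the agent's own report.
const gated = gatherWithValidation({
  meetingCount: 1,
  gather: () => ["Planning - Notes by Gemini", "EOD Summary"],
  fallback: () => [],
  keep: (title) => /Notes by Gemini$/.test(title),
});
// gated.notes has one entry; gated.warnings records that the filter was applied
```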

Cost of Reliability

Day 5 cost $64 — less than the building days, but entirely spent on debugging and hardening. Zero new features. All reliability work.

This is the unglamorous part of agent development that nobody talks about. Days 1-4 are exciting: new agents, new capabilities, compound workflows. Day 5 is: "why does this search return my own reports as meeting notes?"

Key Takeaways

- Enterprise search indexing has lag — never rely on real-time `updated: "today"` filters for recently created documents

- Silent failures are worse than loud failures — agents should validate data volume against expectations

- Your agents' own output pollutes future searches — filter aggressively

- Every search needs a fallback chain, not a single query

- Reliability engineering is invisible work but determines whether your agents are actually trustworthy