Scaling to Full Program Coverage

Scaling to Full Program Coverage

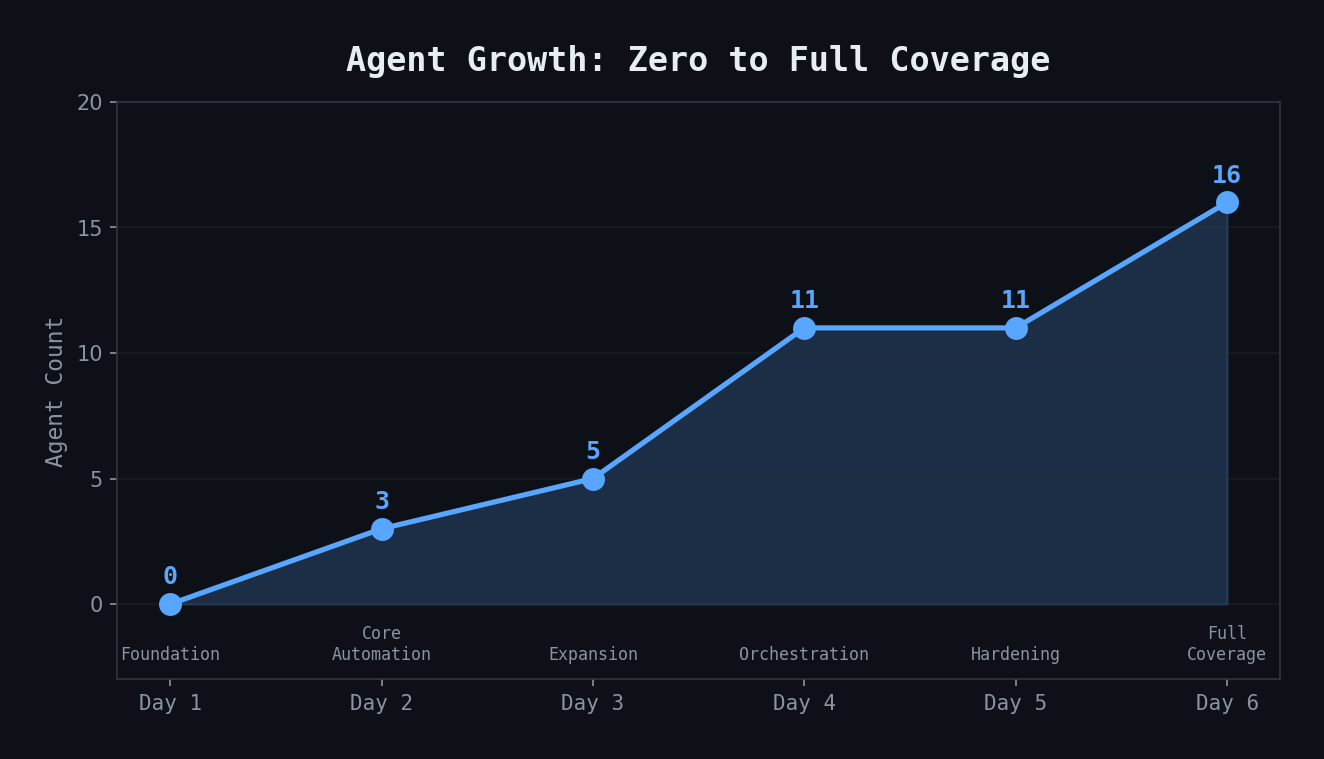

By Day 6, I had a working agent ecosystem for my primary project. But I manage three workstreams, not one. Day 6 was about scaling the pattern to cover everything.

The Coverage Gap

My agents covered:

- Primary project: Sprint board, standup sync, triage

- Cross-functional program: DCT program monitor (built Day 4)

- Missing: AI Pillar (two squads, 8 Slack channels, 7 Confluence pages, a dozen roadmap initiatives)

As a pillar lead, I need to know: What are the AI squads working on? Are sprint goals on track? Which initiatives need attention? Are there blockers I should escalate?

AI Pillar Monitor

The `ai-pillar-monitor` agent scans:

- 8 Slack channels (4 high-priority, 4 medium-priority) for activity, decisions, blockers

- Jira roadmap initiatives filtered to AI-related work

- 7 Confluence pages: Sprint goals, action items, risk register, operating model, team docs

It produces a cross-referenced digest. If a Slack conversation mentions a blocker but the corresponding Jira initiative doesn't reflect it, that gets flagged. If sprint goals are at risk based on ticket velocity, that surfaces in the report.

I also built an `ai-sprint-goals-publisher` that maps roadmap initiatives to sprint rows on a Confluence page — a manual task I was doing every two weeks that took 30 minutes of copy-pasting.

Initiative Health Auditing

This was the surprise of Day 6. I discovered that Jira roadmap initiatives have a "Notes" field that initiative owners are supposed to update regularly. Leadership reads these notes in a biweekly operating report. Stale notes = stale communication = bad look for the team.

I built two auditing agents:

- initiative-notes-checker: Scans three pillar areas, parses each initiative's Notes field, classifies freshness (Fresh/Stale/Empty), and generates a "Who to Nudge" report

- initiative-notes-checker-all: Same concept but scans ALL pillars across the quarter

The freshness classification:

``` FRESH: Note updated within 7 days STALE: Note older than 7 days EMPTY: No note at all NO_DATE: Note exists but has no timestamp ```

The agents also flag misalignments: deactivated owners still assigned to active initiatives, low confidence scores without explanatory notes, past-due target dates with no status update.

Jira Custom Field Discovery

Building the initiative auditing agents required discovering Jira's custom field IDs. Standard fields like `summary` and `status` are documented. But organization-specific fields like "Target Quarter" or "Primary Pillar" have opaque IDs like `customfield_31184`.

I found them by:

- Using the Atlassian MCP's field metadata endpoint

- Querying a known initiative and examining all returned fields

- Mapping human-readable names to field IDs

I documented all seven custom fields in my `MEMORY.md` so every agent could reuse them:

```

- Notes: customfield_10310 (ADF textarea)

- Confidence Score: customfield_31576 (select: 1-5)

- Target Quarter: customfield_31184 (select)

- Primary Pillar: customfield_33869 (select) ```

This is the kind of tribal knowledge that makes or breaks an agent ecosystem. Without these mappings, agents can't read or filter on the fields that matter most.

Knowledge Base Skill

I also created a domain knowledge skill — a comprehensive reference for my organization's product development lifecycle, governance processes, and team structure. This doesn't power an agent directly, but it gives Claude Code deep context when I ask questions about how things work.

The Inventory

By end of Day 6: 16 agents, 35 skills, full pillar coverage. Every workstream I manage has at least one dedicated agent monitoring it.

Highest Cost Day

Day 6 cost $104 — the most expensive day in the entire 8-day journey. The AI pillar monitor is resource-intensive (8 Slack channels + 7 Confluence pages + Jira queries), and I ran the daily briefing agent 6 times while iterating on the new pillar enrichment step.

Key Takeaways

- Scale by replicating patterns, not reinventing — the AI pillar monitor follows the same architecture as the DCT monitor

- Jira custom field IDs are critical tribal knowledge — document them in persistent memory for cross-agent reuse

- Initiative health auditing catches the invisible risks — stale notes and unassigned owners don't show up in sprint metrics

- Expect your highest-cost day when adding coverage for new workstreams — it's the exploration that's expensive

- A domain knowledge skill provides context that makes every other agent better