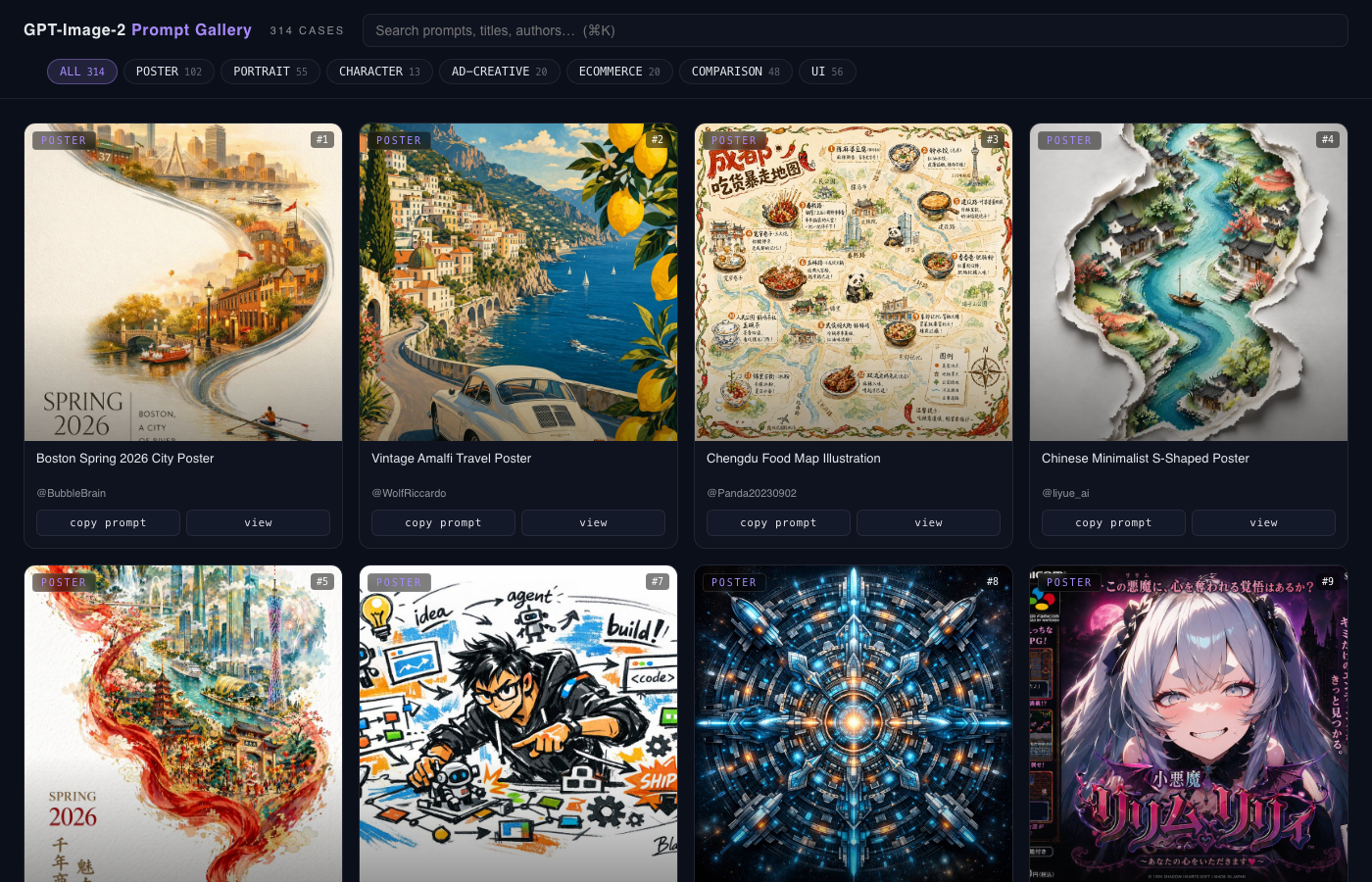

GPT Image 2.0 Prompt Gallery

A searchable static gallery of GPT Image 2.0 prompts and outputs — mostly curated from the upstream awesome-gpt-image-2-prompts repo, with my own prompting research and examples folded in over time.

A single-file static browser for GPT Image 2.0 (GPT-4o native image gen) prompts and the images they produced. Live at image2prompt.carlfung.dev.

Why this exists

GPT Image 2.0 quietly made possible a class of image generation that earlier models couldn't do reliably. The big one: legible text inside images. Midjourney, Stable Diffusion, and even early DALL-E produced garbled text on signs, posters, packaging, and UI. Anything with a word in it either required heavy post-editing or just looked broken. GPT Image 2.0 renders readable text, and that single capability opens up a long list of practical workflows: posters, marketing creative, packaging mockups, infographics, e-commerce thumbnails, ad creative, comic panels, and so on.

I built this gallery to explore that new design surface — collect strong examples from the community, prototype my own prompts against the same model, and document the patterns that reliably work (and the ones that still don't). The community's awesome list is the starting point; my own experiments in cases-local/ are where I'm figuring out what to actually use it for.

What it is

Mostly a curated, browseable mirror of the upstream awesome-gpt-image-2-prompts collection (Apache 2.0). The upstream is a markdown-only repo — useful as a list, hard to actually search and skim. This site re-renders every case as a card you can filter, search, and copy from.

My own additions

This isn't a passive mirror: I add my own prompts and prompting research as I work with the model. Two skills wire it up. image2prompt-add drops a local case into cases-local/, and image2prompt-contribute promotes strong local cases into a PR upstream. Local cases stay even if they don't make it upstream, so the live gallery is always upstream plus my own notebook.

How it's built

build.py clones the upstream repo, parses every cases/<category>.md file, merges in cases-local/, inlines the data into template.html, and emits one static index.html. No JS framework, no runtime fetches. A launchd plist runs refresh.sh on a schedule: it pulls upstream, rebuilds, commits, and pushes, and Vercel redeploys. The gallery stays current with zero manual effort.
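The parse, merge, and inline steps can be sketched roughly as follows. This is a minimal illustration, not the actual build.py: it assumes each case in a category file starts at a `## ` heading, that template.html contains a placeholder marker (`/*__CASES__*/` here, a made-up name), and that cloning has already happened. The function names are hypothetical too.

```python
import json
from pathlib import Path

# Hypothetical marker that template.html is assumed to contain.
PLACEHOLDER = "/*__CASES__*/"


def parse_cases(text: str, category: str, origin: str) -> list[dict]:
    """Split one category file into cases (assumes each case starts at '## ')."""
    cases: list[dict] = []
    current = None
    for line in text.splitlines():
        if line.startswith("## "):
            current = {"title": line[3:].strip(), "category": category,
                       "origin": origin, "body": []}
            cases.append(current)
        elif current is not None:
            current["body"].append(line)  # text before the first heading is skipped
    for case in cases:
        case["body"] = "\n".join(case["body"]).strip()
    return cases


def load_dir(cases_dir: Path, origin: str) -> list[dict]:
    """Parse every <category>.md file in a directory; the file stem is the category."""
    cases: list[dict] = []
    for md_file in sorted(cases_dir.glob("*.md")):
        cases += parse_cases(md_file.read_text(encoding="utf-8"), md_file.stem, origin)
    return cases


def inline_cases(template: str, cases: list[dict]) -> str:
    """Embed the cases as a JSON literal so the page needs no runtime fetch."""
    if PLACEHOLDER not in template:
        raise ValueError("template is missing the data placeholder")
    # Escape '</' so a prompt containing '</script>' cannot close the script tag.
    payload = json.dumps(cases, ensure_ascii=False).replace("</", "<\\/")
    return template.replace(PLACEHOLDER, payload, 1)


def build(upstream_dir: Path, local_dir: Path, template_path: Path, out_path: Path) -> None:
    """Merge upstream and local cases, then write a single static page."""
    cases = load_dir(upstream_dir, "upstream") + load_dir(local_dir, "local")
    html = inline_cases(template_path.read_text(encoding="utf-8"), cases)
    out_path.write_text(html, encoding="utf-8")
```

Tagging each case with its origin keeps local additions distinguishable from upstream ones after the merge, and escaping `</` matters because prompts are free text that ends up inside a script tag.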

Stack

| Layer | Tool |
|---|---|
| Build | Python (stdlib only) |
| Output | single index.html, ~600KB |
| Search | vanilla JS, in-memory |
| Hosting | Vercel (static) |
| Refresh | launchd cron · auto-commit + push |

Credits

Upstream cases © their respective authors via EvoLinkAI/awesome-gpt-image-2-prompts (Apache 2.0). Each card preserves the author handle and links to source.