Fortune Teller — Cosmic Oracle

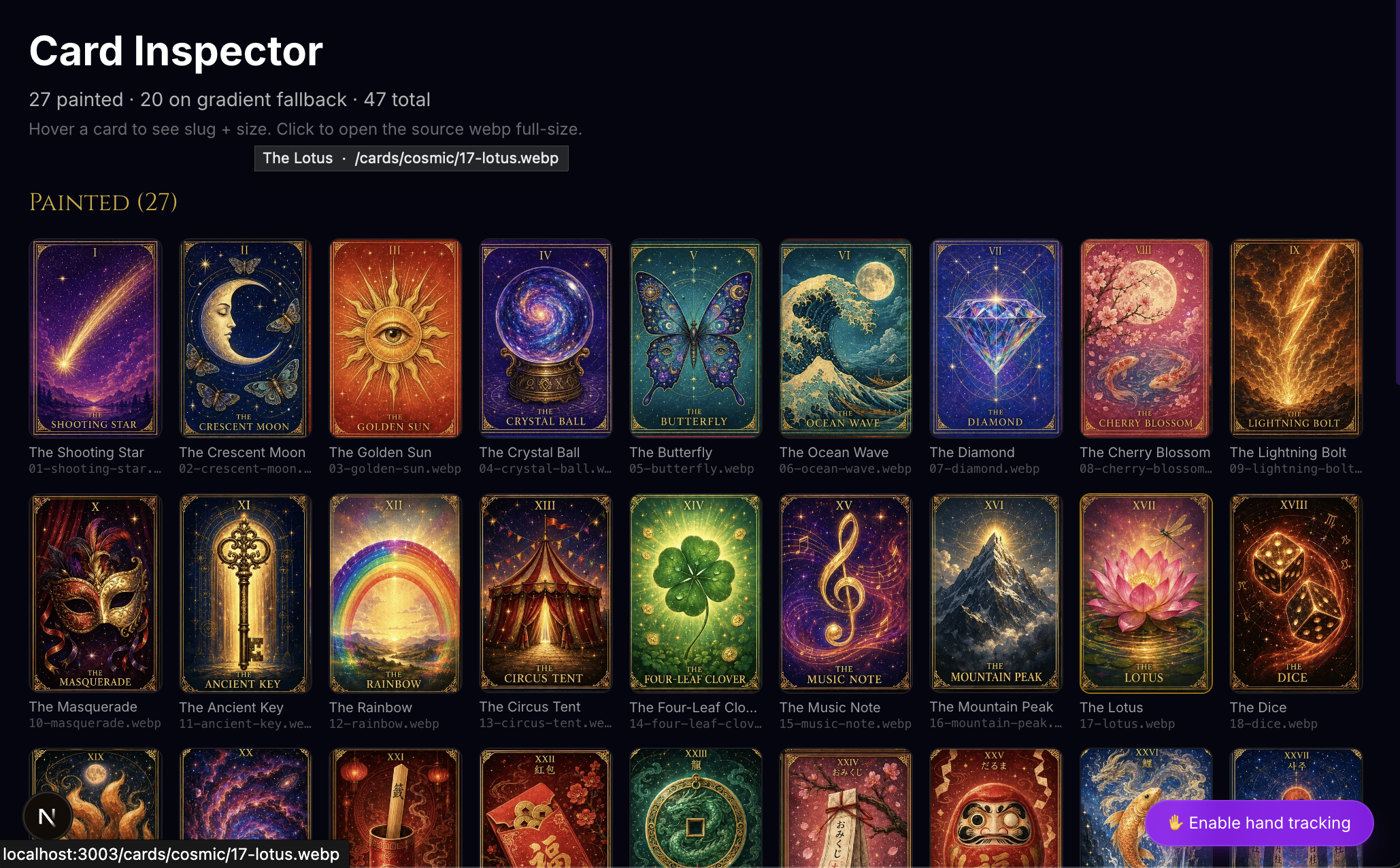

A multi-tradition divination playground for the web: eight cultures, 48 fortunes, 27 painted tarot cards generated with ChatGPT Image 2.0, and a webcam-driven hand-gesture engine layered over the whole site, so you can pinch a tarot card out of the air instead of clicking it. Live at fortune.carlfung.dev.

What it does

| Tradition | UI | Source |
|---|---|---|
| Cosmic Oracle (Tarot) | 5-card spread, 27 painted cards | ChatGPT Image 2.0 |
| 求籤 (Chinese fortune sticks) | Shake-to-draw stick | — |
| おみくじ (Japanese temple fortune) | Box-tilt animation | — |
| 사주 (Korean Four Pillars) | Birth-date input → 4-pillar reading | — |
| Kahve Falı (Turkish coffee) | Cup-rotation reveal | — |
| World Oracle | Mystic orb | — |
| Norse Runes | 3-rune cast | — |
| ज्योतिष (Vedic Stars) | Star wheel | — |

Every interaction works with a mouse, a touch, or — once you click "Enable hand tracking" — a pinch in front of your webcam.

The meta image

The whole project is exactly that picture: half mystical, half computer vision. The site looks like a divination app and reads gestures like a research demo.

How it works

Gestures. A 380-line GestureCursor component owns a @mediapipe/tasks-vision HandLandmarker, smooths 21 landmarks per hand into a cursor position, and detects five gestures from pure geometry — pinch, wipe-left, index swipe-up, one-hand circle, two-hand clap. Pinch fires elementFromPoint().click() on whatever's under the cursor; macro gestures fire CustomEvents on window that components subscribe to. Existing framer-motion whileHover animations work unchanged because the engine dispatches synthetic pointerenter/pointerleave each frame.
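To make "pinch from pure geometry" concrete, here is a minimal sketch of how such a detector could look. It is not the site's actual GestureCursor code. The landmark indices (0 wrist, 4 thumb tip, 8 index tip, 9 middle-finger MCP) follow MediaPipe's 21-point hand model; the threshold values, the smoothing factor, and every function name are assumptions chosen for illustration.

```typescript
// MediaPipe hand-landmark indices (fixed by the 21-point hand model).
const WRIST = 0;
const THUMB_TIP = 4;
const INDEX_TIP = 8;
const MIDDLE_MCP = 9;

interface Landmark {
  x: number; // normalized image coordinates, 0..1
  y: number;
}

// Hypothetical thresholds: enter a pinch below PINCH_ON, leave it above PINCH_OFF.
const PINCH_ON = 0.25;
const PINCH_OFF = 0.4;

function landmarkDistance(a: Landmark, b: Landmark): number {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

// Thumb-to-index distance divided by palm length, so the ratio stays
// roughly the same whether the hand is near to or far from the camera.
function pinchRatio(hand: Landmark[]): number {
  const palm = landmarkDistance(hand[WRIST], hand[MIDDLE_MCP]);
  if (palm === 0) return Infinity;
  return landmarkDistance(hand[THUMB_TIP], hand[INDEX_TIP]) / palm;
}

// Hysteresis: two thresholds keep the state from flickering when the
// ratio hovers near a single cut-off.
function updatePinch(wasPinching: boolean, ratio: number): boolean {
  return wasPinching ? ratio < PINCH_OFF : ratio < PINCH_ON;
}

// Exponential moving average used to steady the cursor position.
// alpha = 1 follows the raw landmark exactly; smaller values smooth more.
function smoothCursor(prev: Landmark, next: Landmark, alpha = 0.35): Landmark {
  return {
    x: prev.x + alpha * (next.x - prev.x),
    y: prev.y + alpha * (next.y - prev.y),
  };
}
```

In a browser, the transition from not pinching to pinching is the moment a click would be fired at the smoothed cursor position. The two-threshold design trades a little latency on release for far fewer accidental double clicks.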

Cards. The 27 tarot illustrations came out of three ChatGPT Image 2.0 prompts — nine cards per 1024×1536 sheet — sliced apart by an 80-line sharp script that auto-detects seams using a window-difference RGB scan, with per-sheet manual overrides for stubborn cuts.
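The seam search can be sketched as follows. This is one plausible reading of a "window-difference RGB scan", not the project's actual script: average each pixel column's RGB, then, near where a cut is expected, pick the column where the mean color of a small window to the left differs most from the window to the right. The function names, the window size, and the search radius are all assumptions.

```typescript
// Mean R, G, B of every pixel column in a raw interleaved buffer.
// The result holds 3 values per column: [r0, g0, b0, r1, g1, b1, ...].
function columnMeans(
  data: Uint8Array,
  width: number,
  height: number,
  channels: number, // 3 for RGB, 4 for RGBA; any alpha channel is ignored
): Float64Array {
  const means = new Float64Array(width * 3);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const i = (y * width + x) * channels;
      means[x * 3] += data[i];
      means[x * 3 + 1] += data[i + 1];
      means[x * 3 + 2] += data[i + 2];
    }
  }
  for (let k = 0; k < means.length; k++) means[k] /= height;
  return means;
}

// Returns the column x (within searchRadius of expectedX) where the mean
// color of columns [x - win, x) differs most from that of columns [x, x + win).
function findSeam(
  means: Float64Array,
  width: number,
  expectedX: number,
  searchRadius: number,
  win: number,
): number {
  const lo = Math.max(win, expectedX - searchRadius);
  const hi = Math.min(width - win, expectedX + searchRadius);
  let bestX = expectedX; // fall back to the expected cut if nothing can be scanned
  let bestScore = -1;
  for (let x = lo; x <= hi; x++) {
    let score = 0;
    for (let c = 0; c < 3; c++) {
      let left = 0;
      let right = 0;
      for (let k = 1; k <= win; k++) left += means[(x - k) * 3 + c];
      for (let k = 0; k < win; k++) right += means[(x + k) * 3 + c];
      score += Math.abs(left - right) / win;
    }
    if (score > bestScore) {
      bestScore = score;
      bestX = x;
    }
  }
  return bestX;
}
```

With sharp, a suitable buffer can be obtained from `.raw().toBuffer({ resolveWithObject: true })`, which resolves to the pixel data along with its width, height, and channel count. Horizontal seams work the same way with rows in place of columns, and a manual override simply replaces the returned value for a given sheet.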

Onboarding. A 5-step gesture tutorial activates on first camera-on (pinch → wipe-left → circle → index-up → clap), persisted to localStorage so it never reappears.
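A first-run tutorial like this reduces to a small state machine plus one persisted flag. The sketch below is an illustration, not the site's code: the step order comes from the description above, while the storage key, the function names, and the KeyValueStore interface are hypothetical. The interface matches the two localStorage methods used, so window.localStorage can be passed in directly in a browser.

```typescript
// Step order as described above.
const TUTORIAL_STEPS = ["pinch", "wipe-left", "circle", "index-up", "clap"] as const;
type TutorialGesture = (typeof TUTORIAL_STEPS)[number];

// Hypothetical storage key; the real app may use a different one.
const TUTORIAL_DONE_KEY = "gesture-tutorial-done";

// The subset of the Web Storage API this sketch needs.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

// True until the tutorial has been completed once on this device.
function shouldShowTutorial(store: KeyValueStore): boolean {
  return store.getItem(TUTORIAL_DONE_KEY) !== "1";
}

// Advances only when the detected gesture matches the current step;
// any other gesture is ignored. Completing the last step persists the flag.
function advanceTutorial(
  step: number,
  gesture: TutorialGesture,
  store: KeyValueStore,
): number {
  if (gesture !== TUTORIAL_STEPS[step]) return step;
  const next = step + 1;
  if (next === TUTORIAL_STEPS.length) store.setItem(TUTORIAL_DONE_KEY, "1");
  return next;
}
```

Passing the store in as a parameter keeps the logic testable outside a browser and avoids touching localStorage during server-side rendering.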

Tech Stack

| Layer | Technology |
|---|---|
| Framework | Next.js 15 (App Router, React 19) |
| Styling | Tailwind CSS 4, Cinzel + Inter |
| Animation | Framer Motion 12 |
| Computer vision | @mediapipe/tasks-vision (HandLandmarker, WASM + GPU delegate) |
| Image generation | ChatGPT Image 2.0 (cards), Imagen 4 Ultra (hero art via Vertex AI) |
| Image processing | sharp (Node) — 80-line manifest-driven slicer |
| Hosting | Vercel + carlfung.dev wildcard subdomain |

Build Log

- Pinching a Tarot Card With My Hand: A Web Gesture Engine in 380 Lines — the full story: MediaPipe vs. the alternatives, pinch from pure geometry, the synthetic-pointer-event trick that lets the existing site react to gestures without a rewrite, the 27-card Image 2.0 pipeline, and the universal gesture vocab extracted as a cross-project design system.

Try it

- Open fortune.carlfung.dev on a desktop browser.

- Click the bottom-right ✋ Enable hand tracking pill.

- Grant camera permission.

- Pinch a card — your fortune awaits.

There's a /gesture-lab route too, if you want to see the raw landmarks and live pinch confidence.